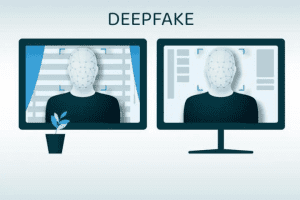

The idea that your likeness could be reproduced flawlessly, in a world where your online identity is tied directly to you, is alarming. But that’s exactly what we’re up against now that deepfake technology has arrived.

What are the risks of deepfakes as the technology grows cheaper and easier to use? And how can you tell the difference between a deepfake and the real thing?

What Is a Deepfake, Exactly?

A deepfake is a piece of media in which a person’s likeness is replaced with that of someone else. The name is a combination of the words “deep learning” and “fake,” and it refers to the use of machine learning algorithms and artificial intelligence to create realistic-yet-artificial media.

The most basic example is one person’s face superimposed onto another person’s body. At its most dangerous, deepfake technology stitches unsuspecting people into fake pornographic videos, phony news, hoaxes, and more.

Deepfakes: What Are the Risks?

Fake images have been around for as long as anyone can remember. Working out what is real and what is not has become a routine part of life, especially since the rise of digital media. Deepfake technology complicates matters further by making fake photos and fake videos exceptionally convincing.

A deepfake video showing the CEO of a large firm or bank making a damaging statement could trigger a stock market fall. Again, this is an extreme scenario. But while people can take the time to inspect and verify a video, global markets react to news almost instantly, and automated sell-offs do occur.

Another factor to think about is volume. As deepfake material becomes cheaper to produce, it opens the door to massive amounts of deepfake content of the same individual, all presenting the same fake message in a variety of tones, settings, and styles.

Following on from hoax material is the recognition that deepfakes will become exceedingly realistic. So much so that people will begin to doubt whether any video is real, regardless of its content.

If the only evidence is a video, what’s to stop someone from declaring, “It’s a deepfake, it’s false evidence”? Conversely, what is to stop someone from planting deepfake video evidence for others to find?

There have already been several cases of deepfake content posing as thought leaders. Profiles on LinkedIn and Twitter list high-ranking positions in strategic organizations, yet these people do not exist and were most likely created using deepfake technology.

However, this isn’t a problem exclusive to deepfakes. Governments, espionage networks, and companies have long used phony profiles and personas to gather information, advance agendas, and manipulate people.

When it comes to security, social engineering is already a problem. People want to trust other people. It is in our nature. However, that trust can lead to data theft, security breaches, and other issues. Social engineering usually requires personal contact, whether over the phone, via video call, or elsewhere.

Now suppose someone used deepfake technology to impersonate a director in order to obtain security codes or other confidential information. A flood of deepfake scams could follow.

Deepfakes: How to Spot and Detect Them

Knowing how to recognize a deepfake is becoming increasingly crucial as the quality of deepfakes improves. In the beginning there were some easy tells: blurry visuals, video glitches and artifacts, and other flaws. These telltale signs, however, are becoming rarer as the cost of using the technology falls.

There is no perfect way to detect deepfake content, but here are four practical tips:

- Details. Even as deepfake technology improves, there are still some areas where it falls short, particularly the fine details within a video: hair movement, eye movement, cheek structure, facial movement during speech, and unnatural facial expressions. Eye movement is a key indicator. Although deepfakes can now blink convincingly (the lack of blinking was once a huge tell), eye movement remains a problem.

- Emotion. Emotion is linked to detail. When someone is making a forceful point, their face shows a range of emotions as they deliver it. Deepfakes struggle to convey the same depth of emotion as a real person.

- Inconsistency. Video quality has never been better. The smartphone in your pocket can record and transmit 4K video. When a politician makes a statement, it is usually in front of a room full of top-of-the-line recording equipment. As a result, poor recording quality, whether visual or audio, is a notable red flag.

- Source. Is the video hosted on a reputable website? Social media sites use verification to ensure that globally recognized personalities are not impersonated. Those systems have flaws, to be sure. However, knowing where a particularly egregious video is being streamed or hosted can help you judge whether it is real. You might also try a reverse image search to see whether the image appears elsewhere on the internet.
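
To make the reverse image search tip a little more concrete, here is a minimal sketch of one building block that near-duplicate image lookup can rely on: a simple "average hash" fingerprint compared by Hamming distance. This is an illustration under stated assumptions, not how any particular search engine works. The function names and the tiny 4x4 grayscale grids are hypothetical; a real tool would first decode and downscale an actual frame.

```python
from typing import List

# Assumption: an image is already reduced to a small grayscale grid (0-255).
Grid = List[List[int]]


def average_hash(pixels: Grid) -> int:
    """Return a bit fingerprint: 1 where a pixel is brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


# Hypothetical thumbnails: an original, a slightly brightened copy,
# and an unrelated checkerboard image.
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]
brightened = [[min(255, v + 5) for v in row] for row in original]
unrelated = [
    [200, 10, 200, 10],
    [10, 200, 10, 200],
    [200, 10, 200, 10],
    [10, 200, 10, 200],
]

# A small distance suggests the same picture; a large one suggests a different picture.
print(hamming_distance(average_hash(original), average_hash(brightened)))
print(hamming_distance(average_hash(original), average_hash(unrelated)))
```

A low distance between a suspicious still and an image found elsewhere hints that the two share a source, which can help trace where a clip first appeared.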

Deepfake Detection and Prevention Tools

You’re not alone in your battle against deepfakes. Several large tech companies are working on deepfake detection tools, while other platforms are taking steps to block deepfakes permanently.

Microsoft’s deepfake detection tool, the Microsoft Video Authenticator, for example, analyzes a video in seconds and reports on its authenticity to the user. Adobe, meanwhile, gives you the option of digitally signing content to safeguard it from tampering.
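
The general idea behind signing content to detect tampering can be sketched in a few lines. This is not Adobe's implementation: it uses an HMAC with a shared secret key from Python's standard library as a stand-in for a real public-key signature, and the names `sign_media`, `verify_media`, and the key and byte strings are hypothetical.

```python
import hashlib
import hmac


def sign_media(data: bytes, key: bytes) -> str:
    """Return a hex tag that binds the media bytes to the key."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_media(data: bytes, key: bytes, tag: str) -> bool:
    """Recompute the tag and compare in constant time; any changed byte fails."""
    expected = sign_media(data, key)
    return hmac.compare_digest(expected, tag)


# Hypothetical publisher key and stand-in "video" bytes.
key = b"hypothetical-publisher-key"
video = b"frame-1 frame-2 frame-3"
tag = sign_media(video, key)

# An edited copy no longer matches the tag issued for the original.
tampered = b"frame-1 FAKED frame-3"
print(verify_media(video, key, tag))
print(verify_media(tampered, key, tag))
```

A production scheme would more likely use public-key signatures so that anyone can verify a file without holding the signing secret, but the tamper-detection principle is the same.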

Facebook and Twitter have already banned malicious deepfakes (though deepfakes like Will Smith in The Matrix are still allowed), and Google is developing a text-to-speech analysis tool to combat fake audio snippets.

Deepfakes are on their way, and they’re only getting better.

The truth is that since deepfakes hit the mainstream in 2018, their primary use has been to abuse women. Whether it’s making fake pornography using a celebrity’s face or digitally stripping the clothes from someone on social media, it’s all about exploiting, manipulating, and humiliating women all around the world.

There is no doubt that a surge of deepfakes is on the horizon. Although the rise of such technology poses a threat to the public, there is little that can be done to stop its advance.