Imagine unlocking the full potential of large language models (LLMs) like ChatGPT and Bard, enhancing their capabilities with access to constantly updated and personalized information. This is the promise of Retrieval-Augmented Generation (RAG).

While LLMs are powerful tools, their knowledge is primarily limited by their training data. RAG bridges this gap by seamlessly integrating external knowledge bases, allowing LLMs to deliver more relevant, accurate, and up-to-date responses to your inquiries. This empowers you to make informed decisions, improve your workflow, and unlock a new level of intelligent support.

Let’s delve deeper into the world of RAG and discover how it can transform your LLM experience.

The Information Overload Dilemma

Before the emergence of large language models (LLMs), organizations relied heavily on traditional search and knowledge management tools for information access. However, these tools faced critical challenges as they:

- Lacked context

- Were slow and inefficient

- Were time-consuming

- Were siloed

The need for a new approach to information access led to the emergence of large language models (LLMs). LLMs such as ChatGPT and Bard sparked excitement for their potential to respond to complex queries in an intuitive and user-friendly way. They leveraged powerful algorithms to analyze and synthesize data from diverse sources, addressing queries within seconds.

However powerful they are, LLMs still face limitations:

- They are static: LLMs are ‘stuck’ at the point in time when their training data was collected and lack up-to-date information. Retraining them on refreshed data is expensive, so it is not viable to do so frequently.

- They lack domain-specific knowledge: LLMs are trained for generalized tasks. They have no access to a company’s private data.

- They function as black boxes: It is almost impossible to identify the sources LLMs used to arrive at conclusions.

- They are susceptible to hallucinations: LLMs can sometimes invent information or create unrealistic scenarios, which can hinder their reliability and trustworthiness.

ChatGPT, a powerful LLM, has a training data cutoff of April 2023. If you ask ChatGPT about anything that occurred last month, it cannot draw on its training data to answer accurately. Instead, it may concoct a very convincing-sounding but false answer. This behavior is commonly referred to as hallucination.

Such limitations highlight the need for a technology that can bridge the gap between the impressive capabilities of LLMs and the specific needs of businesses. This is where Retrieval-Augmented Generation (RAG) comes into play.

Introducing RAG – The Revolutionary Tech

Retrieval-Augmented Generation (RAG) is a technology that helps address the common issues of LLMs by offering precise, relevant, and up-to-date information retrieved from an external knowledge base. In other words, RAG fetches updated and context-specific data from an external database and makes it available to an LLM.

Imagine your LLM empowered with the ability to seamlessly access:

- Proprietary enterprise data: Your internal documents, reports, and communications provide a wealth of context and expertise.

- External knowledge sources: Industry reports, news articles, and research papers keep your LLM updated with the latest trends and developments.

By integrating with an external knowledge store, RAG significantly reduces the risk of hallucinations and improves the accuracy of your LLM’s output. This translates to enhanced decision-making, increased efficiency, and improved customer experience.

Additionally, RAG allows GenAI to cite its sources and improve accountability. Much like research papers, it provides citations for where it obtained an essential piece of data used in its findings.

Understanding the RAG Architecture

The RAG architecture includes the following components:

Data Preparation: It all begins with meticulous data preparation, where document data is gathered alongside metadata. After a thorough PII analysis, documents are split into manageable segments, known as chunks. The optimal chunk size depends on the specific embedding model used and the downstream LLM application that uses this information as context.

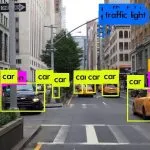
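The chunking step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the function name `chunk_document`, the word-based splitting, and the `chunk_size` and `overlap` defaults are all assumptions made for the example, and the PII analysis step is not covered.

```python
def chunk_document(doc_id: str, text: str,
                   chunk_size: int = 200, overlap: int = 40) -> list[dict]:
    """Split a document into overlapping word-based chunks with metadata.

    chunk_size and overlap are counted in words; both defaults are
    illustrative assumptions, not recommended values.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append({
            "doc_id": doc_id,            # metadata kept alongside the text
            "chunk_id": len(chunks),
            "text": " ".join(words[start:start + chunk_size]),
        })
        if start + chunk_size >= len(words):
            break  # the window already reached the end of the document
    return chunks


if __name__ == "__main__":
    sample = "RAG pipelines split long documents into smaller pieces " * 20
    for chunk in chunk_document("handbook", sample, chunk_size=50, overlap=10):
        print(chunk["chunk_id"], len(chunk["text"].split()), "words")
```

Overlapping the chunks is one common way to avoid cutting a sentence's context in half at a chunk boundary; the right sizes depend on the embedding model and the downstream application, as noted above.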

Index Relevant Data: This component extracts valuable information from the prepared data. It generates document embeddings and populates a Vector Search Index. This index acts as a powerful repository, enabling the LLM to efficiently locate relevant information within the knowledge base.
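To make the indexing step concrete, here is a small sketch that turns chunks into vectors and stores them in an in-memory list. The `embed` function is a toy stand-in (a hashed bag of words), chosen only so the example runs without external dependencies; a real system would call an embedding model and a vector database instead. The names `embed`, `build_index`, and the dimension of 64 are assumptions for illustration.

```python
import hashlib
import math


def embed(text: str, dim: int = 64) -> list[float]:
    """Toy embedding: hash each word into one of `dim` buckets, then normalize.

    This is NOT a real embedding model; it only mimics the interface
    (text in, fixed-length vector out).
    """
    vec = [0.0] * dim
    for token in text.lower().split():
        digest = hashlib.md5(token.encode("utf-8")).hexdigest()
        vec[int(digest, 16) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def build_index(chunks: list[str]) -> list[tuple[str, list[float]]]:
    """Pair every chunk with its vector; a list stands in for a vector index."""
    return [(chunk, embed(chunk)) for chunk in chunks]


if __name__ == "__main__":
    index = build_index([
        "Refunds are processed within five business days.",
        "Shipping is free for orders above fifty dollars.",
    ])
    print(len(index), "chunks indexed, vector length", len(index[0][1]))
```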

Retrieve Relevant Data: When a user submits a query, this component takes center stage. It analyzes the query and the LLM’s current context, then delves into the Vector Search Index to identify the most relevant information. This ensures the LLM has access to the precise knowledge needed to formulate its response.
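The retrieval step can be illustrated with a brute-force similarity search. The sketch below assumes the query has already been embedded with the same model as the chunks and that the index is a plain list of `(text, vector)` pairs; `cosine`, `retrieve`, and the tiny two-dimensional vectors are hypothetical and only serve the example. Real vector search indexes use approximate nearest-neighbor structures rather than scanning every entry.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query_vec: list[float],
             index: list[tuple[str, list[float]]],
             k: int = 3) -> list[tuple[float, str]]:
    """Return the k chunks most similar to the query, best match first."""
    scored = [(cosine(query_vec, vec), text) for text, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


if __name__ == "__main__":
    # Hand-written 2-D vectors stand in for real embeddings.
    index = [
        ("refund policy", [1.0, 0.0]),
        ("shipping times", [0.0, 1.0]),
        ("returns and exchanges", [0.9, 0.1]),
    ]
    for score, text in retrieve([1.0, 0.0], index, k=2):
        print(round(score, 3), text)
```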

Build LLM Applications: Armed with the retrieved information, this component orchestrates the LLM’s response generation. The LLM combines the retrieved passages, supplied as context in its prompt, with the knowledge it gained during training to craft a comprehensive, accurate, and insightful response. The application is typically exposed through a simple REST API, making it readily accessible to clients such as Q&A chatbots.
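One way to picture this final step is as prompt assembly: the retrieved passages are numbered, labeled with their source, and placed ahead of the user's question so the model can ground its answer and cite what it used. The sketch below assumes each passage is a dict with hypothetical `source` and `text` keys; the prompt wording is illustrative, and the actual call to an LLM is deliberately left out because it depends on the provider.

```python
def build_prompt(question: str, passages: list[dict]) -> str:
    """Assemble a grounded prompt from retrieved passages and a user question.

    Each passage is expected to carry 'source' and 'text' keys (an assumption
    of this sketch). Numbering the passages lets the model cite them.
    """
    context = "\n".join(
        f"[{i}] ({p['source']}) {p['text']}"
        for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered context passages below. "
        "Cite passages by number, and say so if the context is insufficient.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


if __name__ == "__main__":
    passages = [
        {"source": "refund-policy.pdf",
         "text": "Refunds are processed within five business days."},
        {"source": "faq.md",
         "text": "Refunds are issued to the original payment method."},
    ]
    print(build_prompt("How long do refunds take?", passages))
```

The returned string would then be sent to whichever LLM the application uses; keeping the source label next to each passage is what makes citation in the answer possible.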

How Will RAG Impact Various Industries?

RAG demonstrates incredible potential to revolutionize various industries. Let’s look at some examples here.

- Banking: Banks struggle with time-consuming and expensive credit assessment processes. RAG can help here. By combining pre-trained language models with relevant financial data and credit history information retrieved from existing databases, RAG can support accurate and reliable credit assessments in a fraction of the time and cost. This empowers banks to make faster, smarter decisions while saving valuable resources.

- Legal Industry: Legal professionals often drown in a sea of generic information, struggling to find relevant case laws and regulations specific to their client’s needs. RAG changes the game. By tapping into domain-specific knowledge bases, it delivers precise legal insights tailored to each case and jurisdiction.

- Customer Service Industry: RAG supports the customer service industry by helping deliver better customer experiences. It draws on product catalogs, policies, and past interactions to provide support agents with reliable, context-aware information in real time. This empowers them to resolve customer inquiries quickly and accurately.

The Future with RAG + LLM and Its Impact on Workforce Efficiency

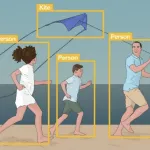

RAG + LLM can empower your workforce with intelligent assistants, capable of accessing and processing vast amounts of data. The duo can revolutionize the workforce in the following ways:

- Improved productivity: RAG-powered LLMs provide employees with instant access to the knowledge they need, allowing them to focus on higher-value tasks.

- Strategic decision-making: RAG can analyze vast amounts of internal and external information and provide access to real-time insights and data-driven recommendations.

- Reduced Costs: By automating routine tasks and streamlining workflows, RAG-powered LLMs can significantly reduce operational costs.

- Constant upskilling and reskilling: Finally, RAG-powered LLMs can act as personalized training tools, providing employees with customized learning pathways and access to expert knowledge. This facilitates continuous upskilling of your workforce so they remain adaptable and future-proof.

Conclusion

RAG is currently one of the best available tools for grounding LLMs in the latest and most accurate information. It also lowers the cost of keeping them current, because the knowledge base can be updated without constantly retraining the model.

Going forward, integrating RAG into various business apps can drastically boost user experience and information accuracy. It can also help to navigate the complexities of modern AI apps with precision and assurance.