Machine learning models are everywhere. You probably use them in your everyday tasks more than you realize. ML is a powerful discipline of computer science that has found its way into practically every digital device we interact with, including social media, automobiles, and even home appliances. However, training and running these models is time-consuming and resource-intensive. If we want ML to expand its reach and open a new era of applications, we need ways to make inference easier on smaller, more resource-constrained devices, and computing platforms that can keep up with the rate at which machine learning services are being used. TinyML (Tiny Machine Learning) is the result of this pursuit. TinyML is currently one of the hottest technologies in embedded computing. According to ABI Research, a worldwide tech market consultancy group, the TinyML chipset will be used in roughly 230 billion devices by 2030. So, let’s put this into context: what exactly is TinyML? And, perhaps more crucially, what can be done with it?

What is TinyML?

TinyML is a form of machine learning in which deep learning networks are shrunk to fit on very small hardware. It is a subfield of machine learning and embedded systems that aims to enable ML applications on low-cost, resource- and power-constrained devices such as microcontrollers. Its goal is to bring machine learning to the edge, allowing battery-powered, microcontroller-based embedded devices to perform ML tasks in real time. It provides model inference on edge devices with low latency, low power, and low bandwidth.

The microcontrollers used in TinyML are very small, measuring only a few millimeters in length and consuming only a few milliwatts of power. They also have relatively limited memory, usually less than a few hundred kilobytes. A typical microcontroller draws milliwatts or microwatts of power, whereas a typical consumer CPU draws 65 to 85 watts and a typical consumer GPU draws 200 to 500 watts. That is a reduction in power draw of a factor of 1,000 or more. Because of their low power consumption, TinyML devices can run on batteries for weeks, months, or even years while performing machine learning applications on the edge.
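To make the "weeks, months, or even years" claim concrete, here is a rough back-of-the-envelope sketch. The battery figures (a 225 mAh, 3 V coin cell) and the power levels are illustrative assumptions, not measurements from any particular device, and the calculation ignores self-discharge and voltage-converter losses.

```python
# Rough battery-life estimate for an always-on device.
# All figures are illustrative assumptions, not measurements.

def battery_life_hours(capacity_mah: float, voltage_v: float, avg_power_mw: float) -> float:
    """Hours of runtime, ignoring self-discharge and converter losses."""
    energy_mwh = capacity_mah * voltage_v  # mAh * V = mWh
    return energy_mwh / avg_power_mw

# Assumed coin cell: 225 mAh at 3 V.
COIN_CELL_MAH = 225.0
COIN_CELL_V = 3.0

scenarios = [
    ("microcontroller at 1 mW", 1.0),
    ("microcontroller at 0.1 mW", 0.1),
    ("desktop CPU at 65 W", 65_000.0),
]

for label, power_mw in scenarios:
    hours = battery_life_hours(COIN_CELL_MAH, COIN_CELL_V, power_mw)
    print(f"{label}: {hours:.2f} h ({hours / 24:.1f} days)")
```

Under these assumptions, a 1 mW workload runs for about 675 hours (roughly four weeks) on a single coin cell, while a 65 W processor would drain the same cell in well under a minute.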

How does TinyML work?

Once the ML model has been trained, it can be compressed, ideally with little or no loss of accuracy. Pruning and knowledge distillation are two of the most prominent compression strategies. Pruning removes the parts of a network that contribute little or nothing to accurate and reliable output. Typically, the weights (or whole neurons) with the smallest magnitudes are eliminated. The pruned network is then usually retrained before deployment to recover any lost accuracy.
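As an illustration, magnitude-based pruning of a single weight matrix might look like the following NumPy sketch. The function name `magnitude_prune` and the example weights are hypothetical, and real toolchains prune during or after training with retraining in between; this only shows the core idea of zeroing the smallest weights.

```python
import numpy as np

def magnitude_prune(weights: np.ndarray, sparsity: float) -> np.ndarray:
    """Return a copy of `weights` with the smallest-magnitude fraction zeroed."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(round(sparsity * weights.size))  # number of weights to remove
    if k == 0:
        return weights.copy()
    # The k-th smallest absolute value becomes the cut-off.
    threshold = np.partition(np.abs(weights).ravel(), k - 1)[k - 1]
    # Keep only weights strictly above the cut-off (ties are pruned too).
    mask = np.abs(weights) > threshold
    return weights * mask

# Hypothetical 2x3 layer: prune half of the weights.
layer = np.array([[0.8, -0.05, 0.3],
                  [0.01, -0.6, 0.02]])
pruned = magnitude_prune(layer, sparsity=0.5)
print(pruned)
print("non-zero weights:", np.count_nonzero(pruned))
```

The zeroed weights only save memory once the matrix is stored in a sparse or otherwise compressed format, which is a separate step not shown here.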

In knowledge distillation, information from a large trained model (the teacher) is transferred to a smaller network with fewer parameters (the student), typically by training the student to reproduce the teacher's outputs. Because the student has fewer parameters, the learned knowledge takes up less space and can be used on devices with limited memory.
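One common way to express this transfer is a loss that pushes the smaller model's output distribution toward the larger model's temperature-softened distribution. The NumPy sketch below shows only that soft-target term; the function names, the temperature of 4.0, and the example logits are assumptions for illustration, and a real training loop would usually combine this with the ordinary loss on the true labels.

```python
import numpy as np

def log_softmax(logits: np.ndarray, temperature: float = 1.0) -> np.ndarray:
    """Numerically stable log-softmax over the last axis."""
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def distillation_loss(student_logits: np.ndarray,
                      teacher_logits: np.ndarray,
                      temperature: float = 4.0) -> float:
    """KL(teacher || student) on softened outputs, averaged over the batch.

    The T^2 factor keeps gradient magnitudes comparable across temperatures.
    """
    log_p = log_softmax(teacher_logits, temperature)  # large model
    log_q = log_softmax(student_logits, temperature)  # small model
    kl = np.sum(np.exp(log_p) * (log_p - log_q), axis=-1)
    return float(temperature ** 2 * kl.mean())

# Hypothetical logits for a batch of two examples and three classes.
teacher = np.array([[5.0, 1.0, 0.5], [0.2, 4.0, 1.0]])
student = np.array([[2.0, 1.5, 0.5], [1.0, 2.0, 1.5]])

print("loss vs. teacher:", distillation_loss(student, teacher))
print("loss when outputs match:", distillation_loss(teacher, teacher))
```

The loss is zero when the two models agree exactly and grows as the smaller model's predictions drift away from the larger model's.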

Quantization reduces the amount of memory needed to store numerical values, for example by converting four-byte floating-point numbers to eight-bit integers (one byte each). It also helps match the model to the arithmetic that the embedded hardware handles efficiently, since many microcontrollers are far better at integer operations than at floating-point ones. Finally, the model is converted into a format that neural network interpreters can understand and execute.
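The float-to-integer conversion can be sketched as a simple affine mapping from float32 values to int8. This is a minimal illustration with hypothetical function names and example weights, not the scheme of any specific framework; production converters typically calibrate ranges per tensor or per channel on representative data.

```python
import numpy as np

def quantize_int8(x: np.ndarray):
    """Affine quantization of a float array to int8.

    Returns the int8 values plus the scale and zero point needed to map back.
    """
    x_min = min(float(x.min()), 0.0)  # make sure 0.0 is representable
    x_max = max(float(x.max()), 0.0)
    scale = (x_max - x_min) / 255.0
    if scale == 0.0:                  # all-zero input
        scale = 1.0
    zero_point = int(round(-128 - x_min / scale))
    q = np.clip(np.round(x / scale) + zero_point, -128, 127).astype(np.int8)
    return q, scale, zero_point

def dequantize(q: np.ndarray, scale: float, zero_point: int) -> np.ndarray:
    """Approximate reconstruction of the original float values."""
    return (q.astype(np.float32) - zero_point) * scale

# Hypothetical float32 weights.
weights = np.array([-1.0, -0.5, 0.0, 0.25, 1.0], dtype=np.float32)
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

print("int8 values:", q)
print("bytes before/after:", weights.nbytes, q.nbytes)
print("max abs error:", float(np.max(np.abs(weights - restored))))
```

Storage drops to a quarter of the original size, at the cost of a small rounding error bounded by roughly one quantization step.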

How can TinyML help?

TinyML enables several distinctive solutions by summarizing and analyzing data at the edge, on low-power devices. For example, it makes it possible to monitor machines continuously and predict failures. Other applications include microsatellite imaging, wildfire detection, crop disease detection, and animal disease detection.

Industrial predictive maintenance is likely to be one of the most popular and impactful use cases. In the automobile industry, power is less of a limitation than cost and reliability. In industrial settings, by contrast, connecting equipment to power is typically more difficult than in our houses, so TinyML's low power consumption is a significant benefit there. The challenge is that the replacement cycle for industrial machines is relatively long, which slows the adoption of new technology.

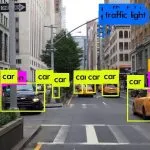

Today, TinyML is used mostly in audio analytics, pattern recognition, and speech-based human-machine interfaces. In the retail industry, TinyML can monitor store shelves, automatically update stock levels, and send alerts when item quantities fall to predetermined levels. It can also track equipment in real time and send notifications when maintenance is required. TinyML sensors strategically placed in high-traffic areas can aid traffic routing, reducing congestion, shortening emergency response times, and lowering pollution.

Why TinyML?

TinyML’s popularity is skyrocketing with each passing year. It is expected to have a significant impact on practically every industry in the future, including retail, healthcare, agriculture, fitness, and manufacturing. TinyML enables machine learning on tiny, low-memory, low-power devices, bringing intelligence to them. Because TinyML is commonly defined as machine learning with a power consumption of less than 1 mW, its hardware platforms must be embedded devices that need extremely little power yet can still run machine learning algorithms.

A typical IoT device gathers data and transfers it to a cloud-based central server, where hosted machine learning models provide insights. TinyML does away with the need to send data to a central server and opens up new possibilities by bringing intelligence to millions of gadgets that we use on a daily basis. Importantly, it does all this in a way that makes TinyML systems easy to deploy across a wide range of applications, with low cost, low latency, low power, and minimal connectivity needs. While traditional machine learning continues to advance towards more sophisticated and resource-intensive systems, TinyML addresses a growing need at the other end of the spectrum.

Conclusion

TinyML is a new approach to edge computing that investigates the deployment and training of machine learning models on edge devices. Compared with traditional machine learning models, TinyML models can perform the same kinds of tasks at a much smaller size and lower complexity.

As one of the leading machine learning companies in the USA, Algoscale brings you operational efficiency, enhanced profitability, and total functional visibility. We extract the essential information hidden in your complicated, raw data and integrate ML tools into your business to support successful campaign delivery and new income opportunities.