We live in a digital world where we see almost everything through a digital lens. Technology has taken over tasks from the simplest to the most serious, and the need for another person to be present for a simple task is shrinking fast.

Computer vision and deep learning are the basis of modern digital monitoring. Whether in security, learning, or daily activities, computer vision helps us monitor outcomes with little human effort. With current computer vision techniques, we can detect, monitor, and even act on results as needed.

One of the crucial parts of computer vision is human body pose estimation. Estimating the pose of an entire body means detecting limbs and joints while coping with variations in lighting, clothing, and image noise. Doing this on a live video stream adds to the challenge. This is where MediaPipe and OpenCV come in.

We will discuss what MediaPipe and OpenCV are, how they simplify human body pose estimation, and how exactly they work. We will then look at how these technologies can be used to build a tool that helps individuals exercise properly without a trainer.

What is OpenCV?

OpenCV (Open Source Computer Vision Library) is an open-source library. It provides a range of tools for image processing, feature extraction, and image manipulation. The library is cross-platform and supports Windows, Linux, and macOS, along with some other platforms. OpenCV applications that help in pose estimation include motion tracking, gesture detection, and structure from motion.

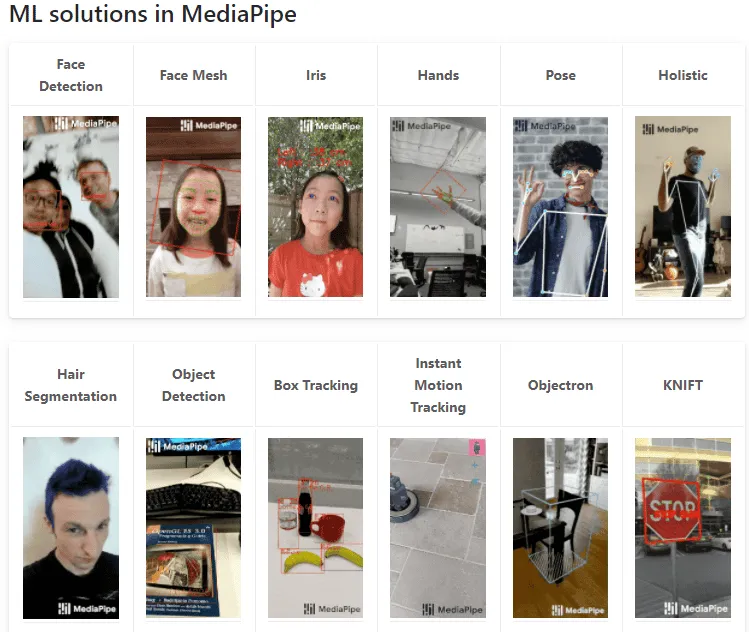

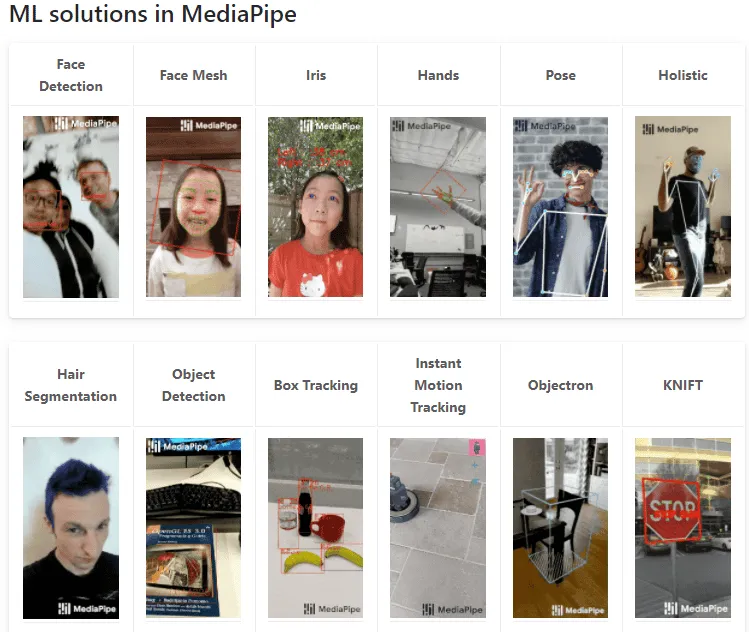

What is MediaPipe?

MediaPipe is also an open-source framework. It provides compact, ready-to-use, fast machine learning solutions for live and streaming media. These solutions, known as pipelines, are cross-platform and available in multiple languages. ML solutions in MediaPipe include face detection, iris tracking, holistic tracking, pose estimation, and face mesh.

How does it work?

To use these computer vision technologies, we have to install them in the system or environment we are working in. Depending on the language you want to use, you can install OpenCV and MediaPipe from the command prompt.

To use OpenCV and MediaPipe in Python, run the following commands:

# Using conda

conda install -c conda-forge opencv

# Using pip

pip install mediapipe

pip install opencv-python

Video Capture using OpenCV

The OpenCV library provides a built-in way to open a streaming device, capture a video stream, and return video frames. We can use it through the OpenCV VideoCapture class, which reads the frames of a video one at a time so that they can be displayed in a window.
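The capture step described above can be sketched roughly as follows. This is a minimal illustration rather than the code used in the original project: the helper names `read_frames` and `show_webcam` are made up for this example, and camera index 0 is assumed to be the default webcam.

```python
def read_frames(capture, max_frames=None):
    """Yield frames from a cv2.VideoCapture-style object until the stream ends."""
    count = 0
    while max_frames is None or count < max_frames:
        ok, frame = capture.read()  # ok is False when no frame could be read
        if not ok:
            break
        yield frame
        count += 1


def show_webcam(camera_index=0, window_name="Webcam"):
    """Display the live stream until the user presses 'q'."""
    import cv2  # imported here so read_frames stays usable without OpenCV

    capture = cv2.VideoCapture(camera_index)  # assumption: 0 is the default camera
    try:
        for frame in read_frames(capture):
            cv2.imshow(window_name, frame)
            # waitKey(1) lets the window refresh and returns the pressed key, if any
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        # Always free the camera and close the window, even on errors
        capture.release()
        cv2.destroyAllWindows()
```

Calling `show_webcam()` opens a preview window. Because `read_frames` only relies on a `read()` method, the same loop should also work for a pre-recorded video file opened with `cv2.VideoCapture`.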

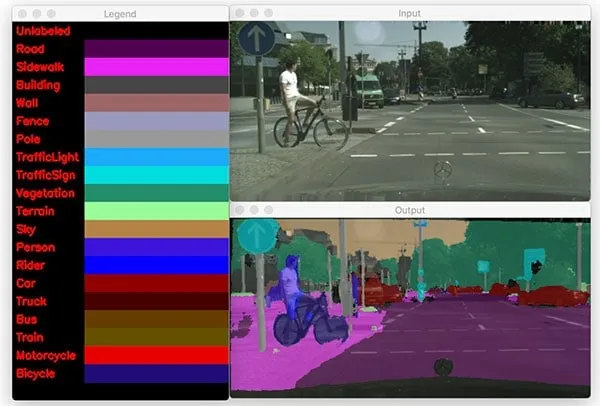

The frames returned by OpenCV are in BGR format, so we first convert them to RGB. Once we have our video frames in RGB, we can apply MediaPipe’s Pose to them to track body posture during a workout.
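As a small illustration of the colour conversion, the sketch below shows what happens to a single pixel and how a whole frame would be converted with OpenCV. The function names are hypothetical and only serve this example.

```python
def bgr_pixel_to_rgb(pixel):
    """Reorder one (blue, green, red) pixel into (red, green, blue)."""
    blue, green, red = pixel
    return (red, green, blue)


def bgr_frame_to_rgb(frame):
    """Convert a whole OpenCV frame (a NumPy array in BGR order) to RGB."""
    import cv2  # imported here so the pixel helper works without OpenCV

    # cvtColor applies the same channel swap to every pixel of the frame
    return cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
```

A pure blue pixel in BGR order, `(255, 0, 0)`, becomes `(0, 0, 255)` in RGB order. Skipping this step does not raise an error, but the model then sees red and blue swapped.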

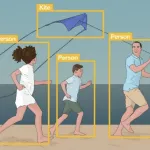

Pose Estimation using MediaPipe’s Pose

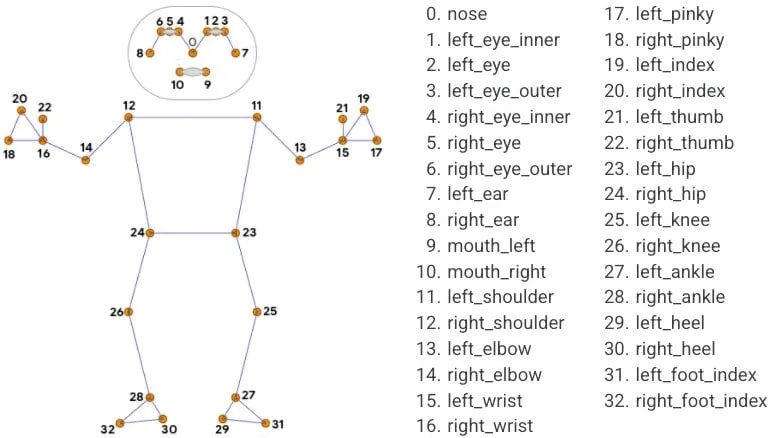

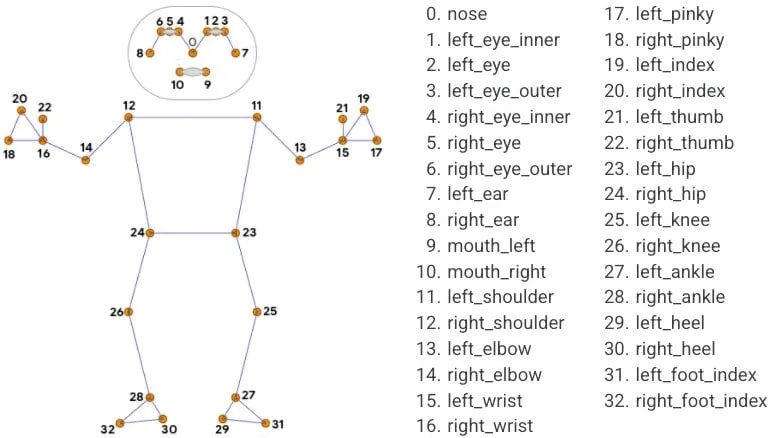

MediaPipe Pose is an ML solution that tracks body pose with high fidelity using 33 landmarks. It builds on the BlazePose research and can also produce a segmentation mask that separates the person from the background of an RGB frame. The resulting pipelines are lightweight compared with conventional ML solutions.

Pose takes two main arguments: the minimum detection confidence and the minimum tracking confidence (min_detection_confidence and min_tracking_confidence in the Python API). These thresholds set how strict the pipeline is about accepting a detection or a tracked pose. The pipeline processes each RGB frame and produces a list of landmark coordinates, which is updated with every frame.
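Putting capture, conversion, and Pose together might look like the sketch below. It assumes a MediaPipe release that still ships the legacy `mp.solutions.pose` Python API. The 0.5 thresholds, the window title, and the helper names `landmark_to_pixel` and `track_pose` are choices made for this example, not values from the original project.

```python
def landmark_to_pixel(landmark, width, height):
    """Map a landmark's normalized x/y (0..1) to pixel coordinates."""
    return int(landmark.x * width), int(landmark.y * height)


def track_pose(camera_index=0, detection_conf=0.5, tracking_conf=0.5):
    """Draw pose landmarks on a live stream until 'q' is pressed."""
    import cv2
    import mediapipe as mp

    mp_pose = mp.solutions.pose
    mp_drawing = mp.solutions.drawing_utils
    capture = cv2.VideoCapture(camera_index)
    try:
        with mp_pose.Pose(
            min_detection_confidence=detection_conf,
            min_tracking_confidence=tracking_conf,
        ) as pose:
            while capture.isOpened():
                ok, frame = capture.read()
                if not ok:
                    break
                # MediaPipe expects RGB input, OpenCV delivers BGR
                rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
                results = pose.process(rgb)
                if results.pose_landmarks:  # None when no person is found
                    height, width = frame.shape[:2]
                    nose = results.pose_landmarks.landmark[mp_pose.PoseLandmark.NOSE]
                    print("nose at", landmark_to_pixel(nose, width, height))
                    mp_drawing.draw_landmarks(
                        frame, results.pose_landmarks, mp_pose.POSE_CONNECTIONS
                    )
                cv2.imshow("Pose", frame)
                if cv2.waitKey(1) & 0xFF == ord("q"):
                    break
    finally:
        capture.release()
        cv2.destroyAllWindows()
```

Raising the two thresholds should make the pipeline more conservative, at the cost of dropping the pose more often when the person is partly hidden.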

Detecting Workout Correctness

After creating the pipeline model, we compare pre-recorded videos of professional trainers with the live stream. We can judge the correctness of a pose by assigning it a score based on how similar it is to, or how far it differs from, the trainer's pose.
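The article does not specify how the score is computed, so the following is only one possible sketch. It assumes that each pose is summarized by a list of joint angles (for example elbow and knee angles derived from the landmarks) and that the trainer frame and the live frame have already been matched in time. The functions `joint_angle` and `pose_similarity` are hypothetical.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by the segments b->a and b->c."""
    angle = math.degrees(
        math.atan2(c[1] - b[1], c[0] - b[0]) - math.atan2(a[1] - b[1], a[0] - b[0])
    )
    angle = abs(angle)
    # Keep the result in the 0..180 range, as for a physical joint
    return 360.0 - angle if angle > 180.0 else angle


def pose_similarity(user_angles, trainer_angles):
    """Return a score in [0, 1]; 1.0 means the joint angles match exactly."""
    if not user_angles or len(user_angles) != len(trainer_angles):
        raise ValueError("both poses need the same, non-zero number of angles")
    differences = [abs(u - t) for u, t in zip(user_angles, trainer_angles)]
    mean_difference = sum(differences) / len(differences)
    # Assumption: 180 degrees is the largest possible difference per joint
    return 1.0 - mean_difference / 180.0
```

For instance, the elbow angle could be computed as `joint_angle(shoulder, elbow, wrist)` from three landmark positions. An application might then flag a repetition as incorrect when the score drops below a chosen threshold, which would need tuning per exercise.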

The complete model can be wrapped in a user-friendly application, because solutions built with MediaPipe are lightweight and mobile-friendly.

Similar Technologies

MediaPipe is not the only cutting-edge technology in the field of computer vision. With ever-growing open resources, there are many such tools at hand. Here are some of the most popular computer vision tools that you should know about:

ML Kit

ML Kit is a lightweight SDK from Google that helps mobile developers add machine learning to their apps. It provides ready-to-use APIs that can be integrated into an application.

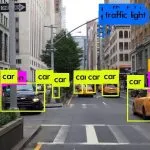

YOLO

YOLO (You Only Look Once) is a state-of-the-art, real-time object detection system. Its version YOLOv3 is a fast and effective system that can process around 30 FPS. It uses the 53-layer Darknet-53 network as its backbone. You can read more about it here and find out how Algoscale utilizes it.

Raster Vision

Raster Vision is a framework for building computer vision models on satellite, aerial, and other large images. It is well suited to aerial monitoring tasks.

Algoscale bridging the gap between Computer Vision and AI-Based Businesses

We hope that this article was insightful. Algoscale provides businesses with data and AI solutions, and we help data scientists and tech enthusiasts build their knowledge. Information never stops growing, so we keep you updated with the latest.

Computer vision is vast. With each new day, more businesses are adopting automation technologies. AI and computer vision are becoming the new normal in business. Algoscale is a pioneer in AI, data science, and product engineering. Tools like the one just discussed are part of what Algoscale does best.

Algoscale consults for new establishments as well as leading enterprises. If your enterprise wants to incorporate artificial intelligence, Algoscale is the place for you. If your start-up wants state-of-the-art data science technologies, Algoscale will provide the best solution. The best progress sometimes requires sudden changes, and Algoscale is here to make those changes seamless, quick, and efficient for you. Whatever help a new business, an old firm, or an up-and-coming venture requires, Algoscale can provide. Because We Love Data!

Feel free to reach out for more insight into how we work, what we do, and what is in store for you.

Happy Learning!