Listening is one of the most important virtues we look for in human relationships. But do people always listen? Well, not always. Machines, however, certainly do. I mean, Alexa does. You’re talking to a machine when you give voice instructions to your smart speaker or ask your phone’s voice assistant for the weather report. It has become commonplace to talk to computers and have them listen to and understand what we’re saying. But have you ever wondered how such human-machine connections are possible? Computers understand natural human language through automatic speech recognition (ASR). If you’ve ever used a virtual assistant like Siri or Alexa, you’re already familiar with ASR systems. ASR has changed the way we engage with machines by letting gadgets pick up the context and complexity of human language. At its core, ASR is about using machines to convert spoken words into written ones.

According to Statista, the number of digital voice assistants will reach 8.4 billion by 2024, which is more than the world’s population. The success of Google Home, Amazon Echo, Siri, and other voice assistants in recent years makes them the best-known examples of automatic speech recognition. They not only extract the text but also interpret the semantic meaning of what was said, which lets them answer questions or carry out actions in response to the user’s instructions. Let’s learn more about the technology of automatic speech recognition.

What is Automatic Speech Recognition?

Automatic speech recognition (ASR) is a technology that allows computer software to convert human speech into text. In effect, it is a voice-to-text conversion system that can often run in real time. In its most advanced forms, it lets people use their voices to communicate with a computer interface in a way that resembles regular human conversation. ASR is also known as speech-to-text or transcription.

How does Automatic Speech Recognition work?

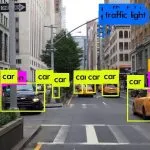

The automatic speech recognition process starts when a computer takes audio input from a human user. The machine then processes the speech by breaking the input down into its components and transcribes the speech to text. The technology is used to carry out an activity based on the user’s commands and, in some systems, to authenticate users by their voice (biometric authentication). Authentication typically requires the primary users’ voices to be pre-configured or stored: the user teaches the system by recording their own speech patterns and terminology.
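To make "breaking down the input" a little more concrete, here is a minimal sketch of one common first step: slicing the audio into short overlapping frames and measuring the energy of each one. The function names, the 25 ms / 10 ms frame sizes, the energy threshold, and the synthetic test signal are all assumptions chosen for illustration; the article does not describe any specific system's front end.

```python
import math

def frame_signal(samples, frame_len, hop):
    """Split a list of samples into overlapping fixed-length frames."""
    frames = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frames.append(samples[start:start + frame_len])
    return frames

def frame_energy(frame):
    """Mean squared amplitude of one frame."""
    return sum(s * s for s in frame) / len(frame)

# Synthetic input (assumed): 0.5 s of silence, then 0.5 s of a 440 Hz tone,
# sampled at 16 kHz. Real input would come from a microphone or audio file.
SAMPLE_RATE = 16000
silence = [0.0] * (SAMPLE_RATE // 2)
tone = [math.sin(2 * math.pi * 440 * n / SAMPLE_RATE)
        for n in range(SAMPLE_RATE // 2)]
samples = silence + tone

# 25 ms frames (400 samples) with a 10 ms hop (160 samples).
frames = frame_signal(samples, frame_len=400, hop=160)
energies = [frame_energy(f) for f in frames]

# Crude activity detection: a frame counts as "active" above a fixed threshold.
active = [e > 0.01 for e in energies]
```

A real recognizer would go on to turn each frame into richer features and pass them to an acoustic and language model; this sketch stops at the framing step.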

Some systems need to be trained to detect specific keywords and speech patterns. Most ASR systems in smartphones and tablets work this way, which makes them speaker-dependent. For the voice assistant to learn to recognize your voice, you must say specific words and phrases into your phone before it can begin to work. Other ASR systems are speaker-independent. They require no training by the user and recognize spoken words no matter who is speaking.
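The enrollment step for a speaker-dependent system can be sketched roughly as follows: the user's sample phrases are reduced to feature vectors, averaged into a stored template, and later utterances are compared against that template. Everything here is hypothetical, including the three-dimensional feature vectors, the `enroll` and `is_enrolled_speaker` helpers, and the 0.95 threshold; production systems use learned voice embeddings with far more dimensions.

```python
import math

def mean_vector(vectors):
    """Element-wise average of equally sized feature vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def enroll(utterance_features):
    """Build a voice template from the phrases spoken during setup."""
    return mean_vector(utterance_features)

def is_enrolled_speaker(template, features, threshold=0.95):
    """Accept the utterance if it is close enough to the stored template."""
    return cosine_similarity(template, features) >= threshold

# Made-up feature vectors for three enrollment phrases from one user.
enrollment = [[0.9, 0.1, 0.3], [1.0, 0.2, 0.2], [0.8, 0.1, 0.4]]
template = enroll(enrollment)

same_speaker = is_enrolled_speaker(template, [0.95, 0.15, 0.3])
other_speaker = is_enrolled_speaker(template, [0.1, 0.9, 0.5])
```

A speaker-independent system skips this enrollment step entirely and relies on a model trained in advance on many different voices.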

Artificial Intelligence in ASR

Speech recognition has a long history, with multiple waves of significant progress. Most recently, advances in artificial intelligence and big data have boosted the discipline. Real-time, two-way dialogue between a person and an electronic device, including speech synthesis on the computer’s side, has only become possible thanks to developments in AI. By self-learning and adapting to changing conditions, artificial intelligence (AI) and machine learning (ML) algorithms can analyze substantially larger datasets and deliver improved accuracy. Numerous ASR software products and devices are available, but the most advanced options rely on AI and ML. To interpret and analyze human speech, they combine grammar, syntax, and the structure and composition of audio and voice signals. In theory, they should learn as they go, adjusting their responses with each interaction.

Natural Language Processing (NLP) is at the heart of the most advanced ASR systems currently available. Though it still has a long way to go before reaching its full potential, we’re already seeing some impressive outcomes in the shape of intelligent voice interfaces like Siri and Alexa, and in other systems used in business and advanced technological contexts. These NLP programs can approach 100 percent accuracy, but only under ideal conditions, such as when the queries directed at them are basic yes-or-no questions with a limited number of viable responses based on specified keywords.
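The "ideal conditions" case, a yes-or-no question answered from a short list of keywords, is simple enough to sketch. The keyword sets and the `classify_reply` function below are illustrative assumptions that operate on text already produced by a recognizer; they are not taken from any particular assistant.

```python
import re

# Hypothetical keyword lists; a real system would tune these per language.
YES_WORDS = {"yes", "yeah", "yep", "sure", "correct"}
NO_WORDS = {"no", "nope", "nah", "never"}

def classify_reply(transcript):
    """Map a transcribed reply to 'yes', 'no', or 'unknown' by keyword."""
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    has_yes = bool(words & YES_WORDS)
    has_no = bool(words & NO_WORDS)
    if has_yes and not has_no:
        return "yes"
    if has_no and not has_yes:
        return "no"
    # Neither keyword, or conflicting keywords: do not guess.
    return "unknown"

replies = ["Yes, please.", "Nope", "Maybe later", "Yes... no, wait"]
labels = [classify_reply(r) for r in replies]
```

The narrow vocabulary is what makes high accuracy achievable here: anything outside the expected keywords falls through to "unknown" instead of being interpreted.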

Automatic Speech Recognition – Use Cases

While its day-to-day applications are innumerable, ASR technology is also changing the way different industries conduct business. Automated subtitling, smart homes, and in-car voice-command systems all use it. The best solutions let businesses customize the technology to their individual needs, from language and speech subtleties to brand recognition. When hours of audio or video files are converted into searchable transcripts, media and entertainment creators can produce content faster. Educational institutions can provide safe, accessible remote learning through real-time captioning in video conferencing software, and researchers can begin analyzing qualitative data in minutes thanks to asynchronous, machine-generated transcription. ASR can increase security by requiring voice recognition for access to restricted places. It can also improve accessibility. Doctors and nurses use dictation programs to capture and log patient diagnoses and treatment notes. And by enabling voice-activated navigation and search features in car radios, speech recognizers make driving safer.

Final Words

We discussed a few examples of how speech-to-text software affects society. As ASR technology improves, it’s becoming a more appealing alternative for businesses trying to better serve their consumers in a virtual environment. This technology still has a long way to go before machines can understand and speak with humans in the same way that fellow humans do.

At Algoscale, we employ multiple Machine Learning services and Natural Language Processing techniques to give a unified representation of the business landscape to help organizations attain decision-making agility. You can get your hands on the latest NLP developments and leverage the potential of AI for your organization with our specialized team of professionals.